【Practical】Lossless PDF Compression: Structural Optimization Solution Based on PyMuPDF

In daily work we often run into oversized PDF files: email attachment limits, slow uploads and downloads, and wasted storage. Common compression methods either downsample images, which makes them blurry, or rasterize pages into images, which destroys text searchability. For many documents that trade-off simply isn't worth it.

Today I'll share a lossless PDF compression approach: using the PyMuPDF (fitz) library to optimize the PDF's internal structure and remove redundant data. It reduces file size without re-encoding text or images, so text searchability and visual quality are fully preserved.

I. Core Implementation Principle

Here is the core code that performs the structural optimization and compression:

```python
import os
from pathlib import Path

import fitz  # PyMuPDF


def compress_pdf_simple(input_path, output_path=None):
    """
    Simple PDF compression - structural optimization only (lossless).

    Parameters:
        input_path (str): Input PDF file path
        output_path (str): Output PDF file path (optional)

    Returns:
        str | None: Output file path, or None if compression failed
    """
    try:
        # Generate an output path next to the input file if none was given
        if output_path is None:
            input_file = Path(input_path)
            output_path = str(input_file.parent / f"{input_file.stem}_simple_compressed{input_file.suffix}")

        # A normal (non-incremental) save cannot overwrite the file that is open
        if Path(output_path).resolve() == Path(input_path).resolve():
            raise ValueError("output_path must differ from input_path")

        # The with block closes the document even if save() raises
        with fitz.open(input_path) as doc:
            # Core: lossless compression through a combination of save() options
            doc.save(
                output_path,
                garbage=4,     # Remove unused objects, compact xref, merge duplicate objects/streams
                deflate=True,  # Compress uncompressed streams with Deflate (lossless)
                clean=True,    # Clean and sanitize page content streams
                pretty=False,  # Default: write objects compactly, no pretty-printing
            )

        # Calculate compression information
        original_size = os.path.getsize(input_path)
        compressed_size = os.path.getsize(output_path)
        compression_ratio = (1 - compressed_size / original_size) * 100

        # Output compression results
        print("✅ Simple compression completed!")
        print(f"📄 Original file: {input_path} ({original_size / 1024 / 1024:.2f} MB)")
        print(f"📦 Compressed file: {output_path} ({compressed_size / 1024 / 1024:.2f} MB)")
        print(f"📉 Compression ratio: {compression_ratio:.1f}%")
        print("🔍 Retain text searchability: Yes")
        return output_path

    except Exception as e:
        print(f"❌ An error occurred during compression: {e}")
        return None


# Example call
# compress_pdf_simple("your_file.pdf")
```

The solution relies on four parameters of PyMuPDF's save() method to slim the PDF down without changing what the document displays. (pretty=False is already the default; it is listed only to make the intent explicit.)

II. In-Depth Analysis of Key Parameters

2.1 garbage=4: Precise Cleaning of "Orphan" Objects

A PDF file is built from many indirect objects (pages, fonts, images, annotations, etc.). When content is deleted during editing, the objects it used are often no longer referenced but are not removed from the file, so "orphan objects" accumulate. Incremental saves make this worse, because each save appends updated objects while the old versions stay in the file.

| garbage value | What it does (each level includes the previous ones) | Applicable Scenarios |
|---|---|---|
| 0 | No garbage collection | Quick save only, no compression needed |
| 1 | Remove unused (unreferenced) objects | Light cleanup |
| 2 | Also compact the cross-reference (xref) table | Regular optimization |
| 3 | Also merge duplicate objects | Stronger optimization |
| 4 | Also check stream contents for duplicates and merge them (can be slow on large files) | Maximum cleanup, e.g. documents edited many times; verify the result afterwards |

Process Flow: Traverse all indirect objects → Establish reference relationship graph → Delete unreferenced objects → Free up storage space.

Actual Effect: Documents edited multiple times can shrink by roughly 10-30%.
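The process flow above is essentially mark-and-sweep garbage collection. The toy model below illustrates it in plain Python; it is not MuPDF's implementation, and representing each object as a list of the object numbers it references is a simplification of real PDF indirect references. collect_garbage is a hypothetical name.

```python
from collections import deque


def collect_garbage(objects, root):
    """Toy mark-and-sweep over a PDF-like object graph.

    objects: dict mapping object number -> list of object numbers it references
    root: object number of the document catalog (/Root in the trailer)
    Returns a new dict containing only the objects reachable from root.
    """
    reachable = set()
    queue = deque([root])
    # Mark: breadth-first traversal starting from the catalog
    while queue:
        num = queue.popleft()
        if num in reachable or num not in objects:
            continue
        reachable.add(num)
        queue.extend(objects[num])
    # Sweep: keep only the marked objects
    return {num: refs for num, refs in objects.items() if num in reachable}


# 1 = catalog, 2 = page tree, 3 = page, 4 = font; 5 and 6 are left over from a deleted page
graph = {1: [2], 2: [3], 3: [4], 4: [], 5: [4, 6], 6: []}
print(sorted(collect_garbage(graph, root=1)))  # [1, 2, 3, 4]
```

Object 5 still points at the font (4), but nothing points at object 5, so it and object 6 are dropped while the shared font survives. This corresponds to garbage=1; higher levels add xref compaction and duplicate merging on top.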

2.2 deflate=True: Universal Lossless Compression Algorithm

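Deflate (the FlateDecode filter in the PDF specification) is a lossless compression algorithm based on LZ77 and Huffman coding. It is the most widely supported stream filter, so virtually every PDF reader can decode it.

When enabled, it compresses streams that are currently stored without compression, for example:

- Page content streams

- Font data streams

- Image data streams that have no compression filter yet

Streams that already use a filter (such as JPEG/DCTDecode images) are left untouched, which keeps the operation lossless. PyMuPDF also offers separate deflate_images and deflate_fonts options that explicitly target uncompressed image and font streams.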
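Deflate's losslessness is easy to demonstrate with Python's standard zlib module, which produces the same zlib/Deflate format that the FlateDecode filter reads. This is plain zlib, not a PyMuPDF call, and the content stream below is made-up sample data.

```python
import zlib

# Made-up page content stream: repetitive text operators, as on a text-heavy page
content_stream = b"BT /F1 12 Tf 72 720 Td (Lossless compression keeps every byte.) Tj ET\n" * 200

compressed = zlib.compress(content_stream, 9)  # zlib/Deflate, the format FlateDecode uses
restored = zlib.decompress(compressed)

print(len(content_stream), len(compressed))
print(restored == content_stream)  # True: the round trip is byte-for-byte identical
```

Repetitive text operators are exactly the kind of data LZ77 matching handles well, which is why text-heavy pages tend to benefit most.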

2.3 clean=True: Optimize Document Structure

A PDF consists of two parts: the "document structure" (the object tree) and the "content streams" (the drawing instructions of each page). clean=True works on the content streams; it corresponds to MuPDF's `mutool clean -sc`:

- Parse each page's content stream and rewrite it with normalized syntax

- Sanitize the stream, which can remove redundant graphics-state operators

Merging duplicate objects is handled by garbage=3/4, not by clean.

Actual Effect: Multi-page documents can shrink by roughly 5-15%.

2.4 pretty=False: Compact Output

PDF is a mixed text/binary format: object dictionaries use plain-text syntax, while streams may hold binary data. pretty=True reformats the objects with indentation and line breaks so a human can read the file source; pretty=False, the default, writes them compactly. pretty=False therefore does not shrink a file by itself; it only avoids the extra whitespace that pretty=True would add.

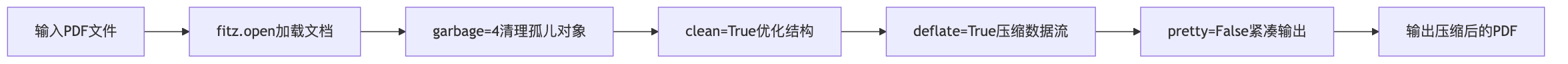

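III. Complete Compression Process

Process Flow: Open the PDF → generate the output path (if not specified) → save() with garbage=4, deflate=True, clean=True, pretty=False → close the document → compare file sizes and report the compression ratio.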

IV. Technical Challenges and Solutions

4.1 Compression Ratio vs Document Integrity

Challenge: Aggressive garbage collection (garbage=4) may damage complex PDFs (containing scripts/forms).

Solution:

- Verify document integrity before compression

- Encrypted PDFs need to be decrypted before compression

- Use try-except to catch exceptions and avoid program crashes

4.2 Retaining Text Searchability

Challenge: Some compression solutions may convert text into images, making it unselectable/unsearchable.

Advantages of this solution:

- Does not modify text content and encoding

- Only optimizes how objects are stored and referenced; clean=True rewrites content-stream syntax but not the text it draws

- Retains font object integrity

- Verification method: Test text selection functionality with a PDF reader after compression

4.3 Memory Management for Large Files

Challenge: PDFs of several hundred MB can exhaust available memory.

Optimization Suggestions:

- Split very large files into page ranges and process each range separately

- Close doc objects promptly (or open them in a with block) to release resources

- Monitor memory usage and process files one at a time instead of keeping many documents open

4.4 Smartly Generating Output Paths

Utilize the pathlib library to automatically generate paths, maintaining the original file name + compression tag, ensuring cross-platform compatibility:

```python
if output_path is None:
    input_file = Path(input_path)
    output_path = str(input_file.parent / f"{input_file.stem}_simple_compressed{input_file.suffix}")
```
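One gap in this snippet: running the compression twice silently overwrites the earlier output. A minimal sketch of a hypothetical make_output_path helper that appends a counter instead; the counter naming scheme is an assumption, not part of the original code.

```python
from pathlib import Path


def make_output_path(input_path, tag="_simple_compressed"):
    """Hypothetical helper: build '<stem><tag><suffix>' next to the input file,
    adding a numeric counter instead of overwriting an existing file."""
    input_file = Path(input_path)
    candidate = input_file.with_name(f"{input_file.stem}{tag}{input_file.suffix}")
    counter = 1
    while candidate.exists():
        candidate = input_file.with_name(f"{input_file.stem}{tag}_{counter}{input_file.suffix}")
        counter += 1
    return str(candidate)
```

For example, if report_simple_compressed.pdf already exists, the next call returns report_simple_compressed_1.pdf.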

V. Use Cases and Effect Estimation

5.1 Applicable Scenarios

- Academic documents: PDFs downloaded from arXiv may contain redundant information

- E-book archiving: Compressing saves storage space

- Document transmission: Pre-process before uploading to email/cloud storage

- Batch processing: Combine with Celery for automated compression

5.2 Effect Estimation

| Document Type | Typical Size Reduction | Remarks |
|---|---|---|
| Pure text documents | 10-25% | Significant effect |
| Contains many images | 5-15% | Only relies on structural optimization; already-compressed images are not re-encoded |
| Documents edited multiple times | 20-40% | Significant garbage collection effect |
| Scanned PDFs | 0-5% | Already in image format, limited optimization |

5.3 Precautions

- Keep the original file: objects removed during compression cannot be recovered from the output

- Verify content integrity: Check the compressed file thoroughly

- Test printing: Make sure printed output is unaffected

- For large batch processing, a distributed architecture is recommended

VI. Comparison with Similar Solutions

| Compression Solution | Size Reduction | Quality Loss | Text Searchability | Implementation Complexity |
|---|---|---|---|---|
| PyMuPDF Structural Optimization | 10-30% | None | Retained | Low |
| Image Quality Reduction Compression | 30-70% | Obvious | Retained | Medium |
| Re-encoding PDF | 20-50% | Possible loss | Possible loss | High |

VII. Performance Optimization Suggestions

- Batch Processing: Use multiprocessing to concurrently process multiple files, improving efficiency

- Progress Monitoring: Add progress callback functions to enhance user experience

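- Incremental Compression: Avoid re-processing unchanged content. Note that save() rewrites the whole file, and PyMuPDF's incremental saves (incremental=True) cannot be combined with garbage collection, so in practice this works at the file level rather than the page level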

- Caching Mechanism: Record already compressed files to avoid duplicate processing

VIII. Conclusion

Key Points Review

- This solution relies on four parameters of PyMuPDF's save() method, garbage=4, deflate=True, clean=True and pretty=False, to achieve lossless PDF compression.

- Its advantages: it does not compromise document quality, retains text searchability, and is simple to implement with good compatibility.

- The effect varies significantly by PDF type: scanned documents benefit little, while text-heavy documents that have been edited many times benefit the most.

This lossless PDF compression solution balances practicality and safety, and its code is simple and easy to integrate, making it suitable for most document processing scenarios. If a higher compression ratio is needed, it can be combined with image optimization (such as downsampling images to a lower DPI). Note that this extra step is lossy, so weigh the additional savings against image quality and added complexity.